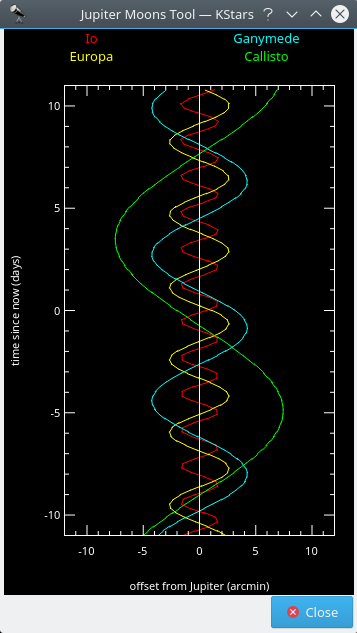

This tool displays the positions of Jupiter's four largest moons (Io, Europa, Ganymede, and Callisto) relative to Jupiter, as a function of time. Time is plotted vertically; the units are days, and “time=0.0” corresponds to the current simulation time. The horizontal axis displays the angular offset from Jupiter's position, in arcminutes, measured along the direction of Jupiter's equator. As each moon orbits Jupiter, its position as a function of time traces a sinusoidal path in the plot. Each track is drawn in a different color to distinguish it from the others; the name labels at the top of the window indicate the color used for each moon (red for Io, yellow for Europa, green for Callisto, and blue for Ganymede).
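To illustrate why the tracks look sinusoidal, here is a minimal Python sketch, not the tool's actual code. It assumes circular orbits seen exactly edge-on, a fixed Earth-Jupiter distance of 5.2 AU, and zero starting phase for every moon; the function name `offset_arcmin` and its parameters are hypothetical. The orbital radii and periods are approximate published values.

```python
import math

AU_KM = 149_597_870.7  # kilometers per astronomical unit

# Approximate orbital radius (km) and orbital period (days) for each moon.
MOONS = {
    "Io": (421_700.0, 1.769),
    "Europa": (671_100.0, 3.551),
    "Ganymede": (1_070_400.0, 7.155),
    "Callisto": (1_882_700.0, 16.689),
}


def offset_arcmin(moon, t_days, distance_au=5.2, phase_rad=0.0):
    """Angular offset from Jupiter along its equator, in arcminutes.

    Assumes a circular, edge-on orbit; distance_au and phase_rad are
    illustrative placeholders, not values taken from the tool.
    """
    radius_km, period_days = MOONS[moon]
    # Maximum angular separation as seen from the assumed distance.
    amplitude = math.degrees(math.atan2(radius_km, distance_au * AU_KM)) * 60.0
    # Projecting uniform circular motion onto one axis gives a sine wave.
    return amplitude * math.sin(2.0 * math.pi * t_days / period_days + phase_rad)


if __name__ == "__main__":
    # Print one row per half day, mimicking the plot's vertical time axis.
    for step in range(-4, 5):
        t = step * 0.5
        row = "  ".join(f"{name}: {offset_arcmin(name, t):+6.2f}" for name in MOONS)
        print(f"t={t:+4.1f} d  {row}")
```

Under these assumptions the inner moons oscillate quickly with a small amplitude, while Callisto swings slowly over the widest range, which matches the nested shape of the tracks in the plot.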

The plot can be manipulated with the keyboard. The time axis can be expanded or compressed using the + and - keys. The time displayed at the center of the window can be changed with the [ and ] keys.